The Invisible Comes to Light

We don’t have the snake’s ability to “see” heat given off as infrared light, and we can’t detect ultraviolet wavelengths as insects – and reindeer – can. Our eyes seem custom-made for the intense orange of a sunset and the violet clouds above it.

But light carries more information than the intensity and color we can see. When light waves pass through a transparent object or bounce off a surface, they bend and slow down, falling out of sync with each other. This decoupling is called a phase change, and our eyes can’t detect it. If we could see phase, we wouldn’t risk bumping into a glass door.

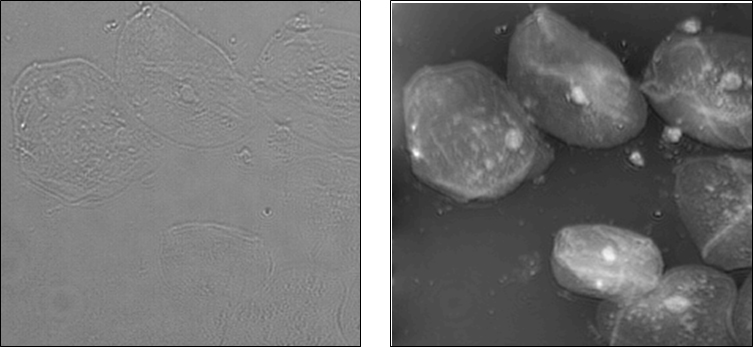

Down at the scale of optical microscopy, the inability to see phase change poses a serious problem. Many cell features are transparent, and researchers can’t detect them without using staining techniques that interfere with normal cell behavior. If they could detect phase changes, the transparent structures would come into view.

The limitation has spawned the active field of quantitative phase microscopy. Engineers develop imaging instrumentation to translate phase change into variations in light intensity that can be seen. The instruments can run $50,000 to $100,000.
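To make the idea concrete, here is a minimal Python sketch of why a transparent feature is invisible to an intensity-only detector, and how mixing the light with a reference beam (interference, one classic way of turning phase into brightness) reveals it. The thickness, refractive indices, and wavelength are illustrative assumptions, not values from Waller’s work, and the function names are hypothetical.

```python
import cmath
import math

def phase_delay(thickness_m, n_object, n_medium, wavelength_m):
    """Phase shift (radians) picked up by light crossing a thin transparent object."""
    return 2 * math.pi * (n_object - n_medium) * thickness_m / wavelength_m

def intensity(field):
    """What a camera or eye records: the squared magnitude of the light field."""
    return abs(field) ** 2

# Assumed, illustrative values: a 5-micron-thick cell feature in water, green light.
phi = phase_delay(thickness_m=5e-6, n_object=1.38, n_medium=1.33, wavelength_m=550e-9)

background = 1 + 0j                     # light that missed the feature
through_feature = cmath.exp(1j * phi)   # same amplitude, delayed phase

# Direct imaging: both intensities are 1, so the feature is invisible.
direct_contrast = abs(intensity(through_feature) - intensity(background))

# Add a reference beam: interference turns the phase delay into a brightness change.
reference = 1 + 0j
with_reference_background = intensity(background + reference)
with_reference_feature = intensity(through_feature + reference)

print(f"phase delay: {phi:.2f} rad")
print(f"direct contrast: {direct_contrast:.3f}")
print(f"with reference: background {with_reference_background:.2f}, "
      f"feature {with_reference_feature:.2f}")
```

The direct contrast is zero because a pure phase delay changes only the timing of the wave, not its strength; only after the wave is combined with a reference does the delay show up as a darker or brighter spot.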

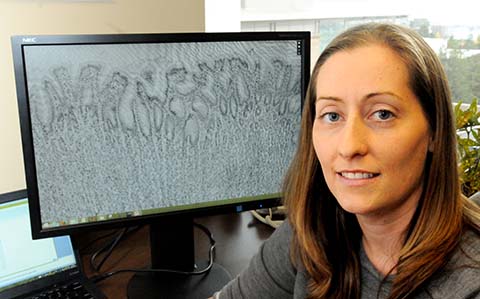

Laura Waller, director of Berkeley’s Computational Imaging Lab, takes a less costly approach to bring the invisible to light. She develops software that works with existing hardware to compute phase change.

By taking images from a few different focal planes or different “illumination angles,” she can determine the degree to which light waves are out of phase. “And through computation, you can reconstruct the surface structure or density that caused the phase change,” she says.
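One well-known way to turn a pair of defocused images into a phase map is the transport-of-intensity equation (TIE), which relates how intensity changes along the optical axis to the curvature of the phase. The article does not say which algorithm Waller’s lab uses, so the following is only a minimal one-dimensional Python sketch under stated assumptions: uniform brightness at focus, paraxial propagation, a periodic sample, and hypothetical function names. It simulates images slightly above and below focus, then recovers the phase from their difference.

```python
import cmath
import math

def dft(values, inverse=False):
    """Naive O(N^2) discrete Fourier transform (fine for small N)."""
    n = len(values)
    sign = 1 if inverse else -1
    out = []
    for k in range(n):
        total = 0j
        for j, v in enumerate(values):
            total += v * cmath.exp(sign * 2j * math.pi * j * k / n)
        out.append(total / n if inverse else total)
    return out

def frequencies(n, spacing):
    """Spatial frequencies (cycles per meter) in DFT order."""
    return [(k if k < n // 2 else k - n) / (n * spacing) for k in range(n)]

def propagate(field, spacing, wavelength, distance):
    """Paraxial (Fresnel) free-space propagation of a 1D complex field."""
    freqs = frequencies(len(field), spacing)
    shifted = [s * cmath.exp(-1j * math.pi * wavelength * distance * f * f)
               for s, f in zip(dft(field), freqs)]
    return dft(shifted, inverse=True)

def recover_phase(i_minus, i_plus, spacing, wavelength, dz, i0=1.0):
    """Solve the 1D uniform-intensity TIE: d2(phi)/dx2 = -(k / I0) * dI/dz."""
    k = 2 * math.pi / wavelength
    # Central difference between the two defocused images approximates dI/dz.
    didz = [(p - m) / (2 * dz) for p, m in zip(i_plus, i_minus)]
    freqs = frequencies(len(didz), spacing)
    # Invert the second derivative in Fourier space; the mean phase is unknowable.
    phi_hat = [0j if f == 0 else (k / i0) * s / (2 * math.pi * f) ** 2
               for s, f in zip(dft(didz), freqs)]
    return [v.real for v in dft(phi_hat, inverse=True)]

# Assumed setup: 64 samples, 1-micron pixels, 0.5-micron light, 2-micron defocus.
n, spacing, wavelength, dz = 64, 1e-6, 0.5e-6, 2e-6
true_phase = [0.3 * math.cos(2 * math.pi * j / n) for j in range(n)]
in_focus = [cmath.exp(1j * p) for p in true_phase]   # transparent: intensity is 1

below = [abs(u) ** 2 for u in propagate(in_focus, spacing, wavelength, -dz)]
above = [abs(u) ** 2 for u in propagate(in_focus, spacing, wavelength, +dz)]

recovered_phase = recover_phase(below, above, spacing, wavelength, dz)
worst_error = max(abs(r - t) for r, t in zip(recovered_phase, true_phase))
print(f"largest phase error: {worst_error:.2e} rad")
```

The in-focus image here is perfectly flat, yet the two slightly defocused images differ, and that difference is enough to reconstruct the hidden phase profile. A real system would work in two dimensions and would have to cope with noise and non-uniform illumination.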

The computational approach offers a relatively inexpensive way to see and quantify what would otherwise be transparent. Hardware and software are designed at the same time to work together and achieve things neither can do alone, Waller says.

These concepts are making their way into commercial products such as the HDR feature of an iPhone camera.

“The camera will take pics at both long and short exposure times, and can use computational tricks to build up an image that has better range of intensities.”
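The exposure-merging idea can be illustrated with a short Python sketch. This is not how any particular phone implements HDR; it is a simplified merge that assumes a linear sensor, pixel values normalized to the range 0 to 1, and hypothetical function names. Each pixel’s brightness is estimated from whichever exposure recorded it in a trustworthy mid-range, so the long exposure handles shadows and the short exposure handles highlights.

```python
def hat_weight(value):
    """Trust mid-range pixels most; give no weight to fully dark or clipped ones."""
    return 1.0 - abs(2.0 * value - 1.0)

def merge_exposures(images, exposure_times):
    """Estimate relative scene brightness per pixel from several exposures.

    Assumes a linear sensor with pixel values normalized to [0, 1].
    """
    merged = []
    for pixel_values in zip(*images):
        weights = [hat_weight(v) for v in pixel_values]
        # Dividing by exposure time puts every shot on the same brightness scale.
        estimates = [v / t for v, t in zip(pixel_values, exposure_times)]
        total = sum(weights)
        if total > 0:
            merged.append(sum(w * e for w, e in zip(weights, estimates)) / total)
        else:
            # Every exposure is black or clipped: fall back to a plain average.
            merged.append(sum(estimates) / len(estimates))
    return merged

def capture(scene, exposure_time):
    """Simulated camera: brightness scales with exposure time, then clips at 1."""
    return [min(1.0, radiance * exposure_time) for radiance in scene]

# Assumed scene spanning a 2000:1 brightness range, from deep shadow to highlight.
scene = [0.02, 0.5, 4.0, 40.0]
long_shot = capture(scene, 1.0)     # shadows resolved, highlights clipped
short_shot = capture(scene, 0.02)   # highlights resolved, shadows nearly black
recovered = merge_exposures([long_shot, short_shot], [1.0, 0.02])

print("long exposure: ", long_shot)
print("short exposure:", short_shot)
print("merged:        ", recovered)
```

Neither single shot covers the full scene: the long exposure clips the two brightest pixels, while the short one pushes the darkest pixel close to zero. The merged result spans the whole range.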

Waller has used computational imaging to digitally refocus images that were intentionally captured out of focus, and to recover 3D information from 2D images. The simplicity of the hardware means that the computational tools can be used with a range of imaging techniques, including X-rays.

“We can use ‘cheap and dirty’ optics to achieve the results of expensive, highly corrected microscopes,” she says. “Imaging labs probably already have the needed hardware. So, they would only need the software.”

“Laura’s research is a great example of data-driven technology that enables new discovery,” says Chris Mentzel, program director of the Data-Driven Discovery Initiative at the Gordon and Betty Moore Foundation.

“The impressive and innovative way she brings disciplines together to bear on the problem – hardware integrated with statistical methods – should be a huge gain in seeing and understanding cell components in action.”

The foundation recently awarded Waller $1.5 million over the next five years as part of its Data-Driven Discovery Initiative.

She was also recognized this year by the David and Lucile Packard Foundation as one of the nation’s most innovative early-career scientists and engineers. She was awarded a 2014 Packard Fellowship for Science and Engineering, providing $875,000 over five years to advance her computational imaging research.

To help move her technology into widespread use, Waller has received a UC Berkeley Bakar Fellowship, which provides research support and mentoring by experts to identify and establish patentable intellectual property and to help make the transition to the commercial world.

Waller wants to extend her approach to view larger volumes of biological tissue, and to carry out the computation and display rapidly enough that researchers can view their samples as video in real time.

“I think this is the kind of work that is likely to have impact in many areas of science and industry, but this early-stage research is not always supported by NIH or NSF. The Bakar program gives us time to focus on general tool development and also to figure out what applications will be most impactful.”

She sees the first applications in biological microscopy, but is also working with Berkeley colleagues and several semiconductor companies to refine measurements of phase edge effects in the photomasks that define different layers of integrated circuits.

“You’d like the masks to be infinitely thin, but actually they have some thickness. This creates phase changes that interfere with the printing process. By imaging the phase effects, we should be able to model them and correct them.”

She’s eager to see the computational imaging software used in research and industry. “If people are using our techniques all over the place, then we’ve really made a difference.”